In recent years, open-source efforts such as Señorita-2M have pushed video editing toward natural-language instruction. However, current publicly available datasets focus predominantly on local editing or style transfer. These tasks largely preserve the original scene structure and are therefore easier to scale. In contrast, Background Replacement, a task central to creative applications such as film production and advertising, requires synthesizing entirely new, temporally consistent scenes while maintaining accurate foreground-background interactions, which makes large-scale data generation significantly more challenging. As a result, this complex task remains largely underexplored owing to a scarcity of high-quality training data. The gap is evident in the poor performance of state-of-the-art models such as Kiwi-Edit, because the primary open-source dataset covering this task, OpenVE-3M, frequently exhibits static, unnatural backgrounds.

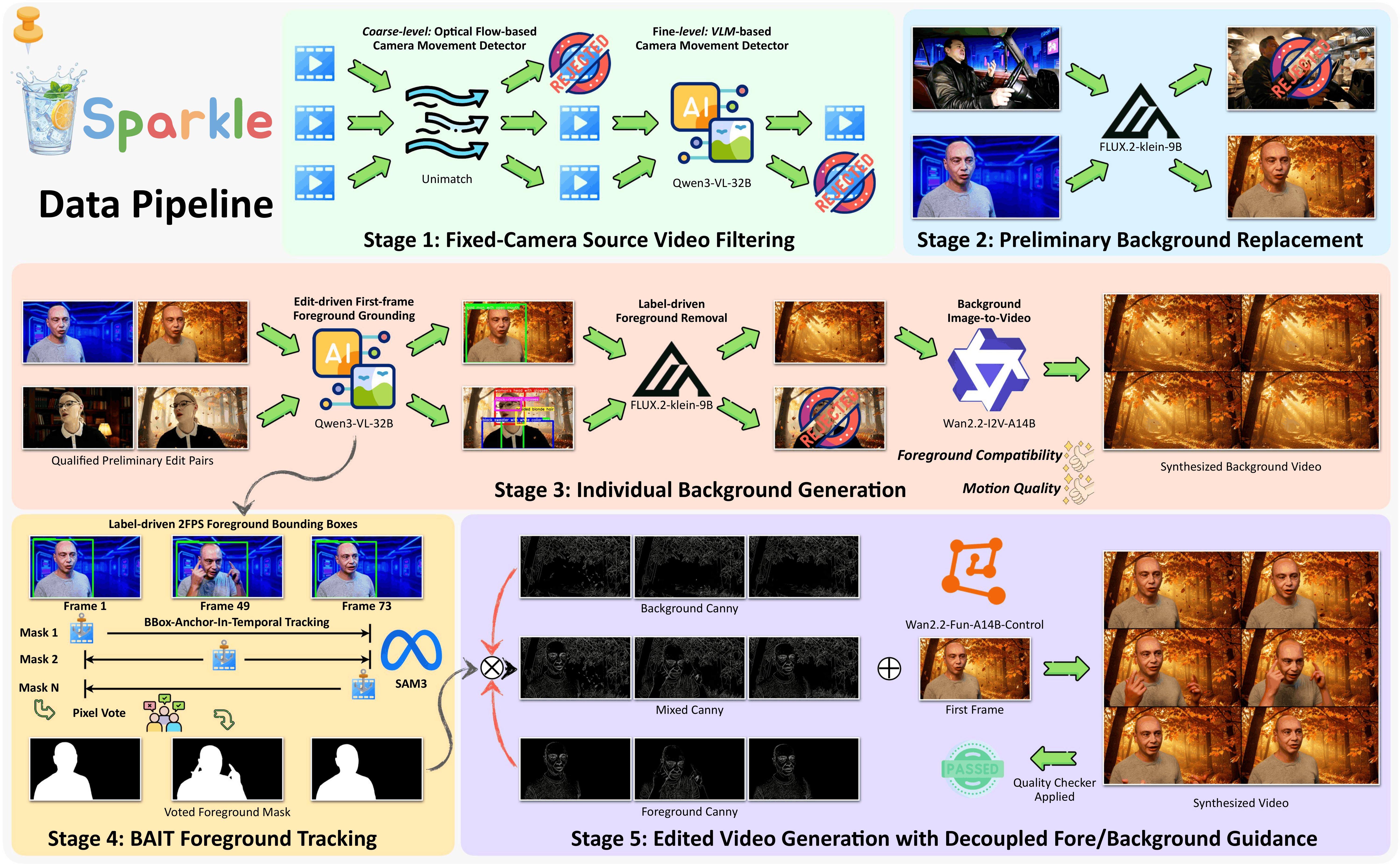

In this paper, we trace this quality degradation to a lack of precise background guidance during data synthesis. Accordingly, we design a scalable pipeline that generates foreground and background guidance in a decoupled manner and applies strict quality filtering. Building on this pipeline, we introduce Sparkle, a dataset of approximately 140K video pairs spanning five common background-change themes, together with Sparkle-Bench, the largest evaluation benchmark tailored to background replacement to date. Experiments demonstrate that our dataset, and the model trained on it, yield substantially better performance than all existing baselines on both OpenVE-Bench and Sparkle-Bench. Our dataset, benchmark, and model are fully open-sourced at github.com/showlab/Sparkle.

:

Realizing Lively Instruction-Guided
