UENR-600K

A Physically Grounded Dataset for Nighttime Video Deraining

²National University of Singapore

600K paired frames rendered in Unreal Engine 5.3 with physically grounded rain simulation.

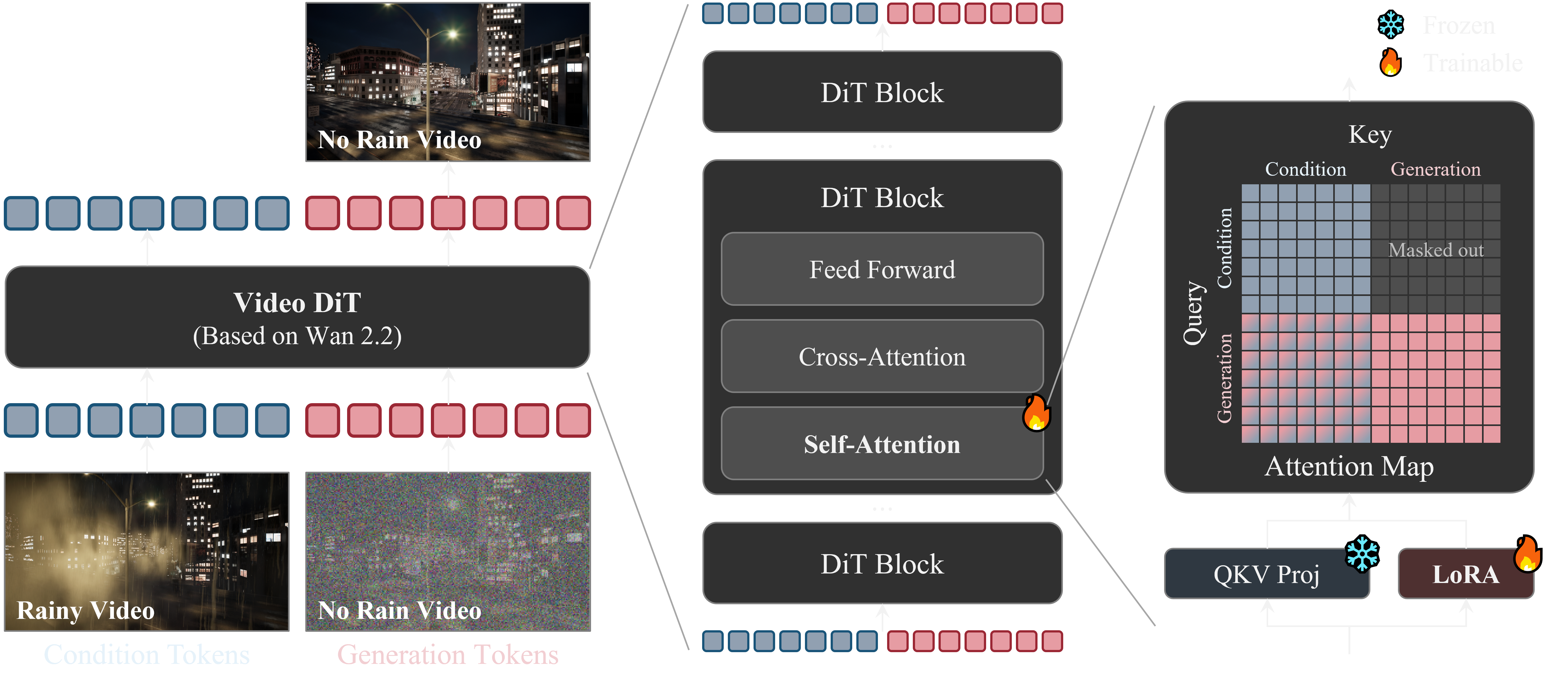

Video diffusion model achieving a 94% preference rate on real nighttime rain videos.