AssistGUI

Graphical User Interface (GUI) automation holds significant promise for assisting users with complex tasks, thereby boosting human productivity. Existing works leveraging Large Language Models (LLMs) or LLM-based AI agents have shown capabilities in automating tasks on Android and Web platforms. However, these tasks primarily target simple device usage and entertainment operations. This paper presents a novel benchmark, AssistGUI, to evaluate whether models are capable of manipulating the mouse and keyboard on the Windows platform in response to user-requested tasks. We carefully collected a set of 100 tasks from nine widely used software applications, such as After Effects and MS Word, each accompanied by the necessary project files for better evaluation. Moreover, we propose an advanced Actor-Critic Embodied Agent framework, which incorporates a sophisticated GUI parser driven by an LLM agent and an enhanced reasoning mechanism adept at handling lengthy procedural tasks. Our experimental results reveal that our GUI parser and reasoning mechanism outperform existing methods. Nevertheless, there remains substantial room for improvement: the best model attains only a 46% success rate on our benchmark. We conclude with a thorough analysis of the limitations of current methods, setting the stage for future breakthroughs in this domain.
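The benchmark's headline metric is a success rate over its tasks. The sketch below illustrates how such a score can be computed. It assumes a hypothetical `Task` record (the field names are illustrative, not the benchmark's actual schema) and a hypothetical `run_and_check` callback that runs an agent on one task and reports whether the resulting project files pass the task's check.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Task:
    # Hypothetical record: the abstract only says each task comes from one of
    # nine applications and ships with project files; names are illustrative.
    query: str
    app: str
    project_files: List[str]


def success_rate(tasks: List[Task], run_and_check: Callable[[Task], bool]) -> float:
    """Fraction of tasks for which the (hypothetical) checker reports success."""
    if not tasks:
        raise ValueError("no tasks to evaluate")
    solved = sum(1 for task in tasks if run_and_check(task))
    return solved / len(tasks)
```

For example, an agent that solves 46 of 100 tasks scores 0.46, the figure reported for the best model above.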

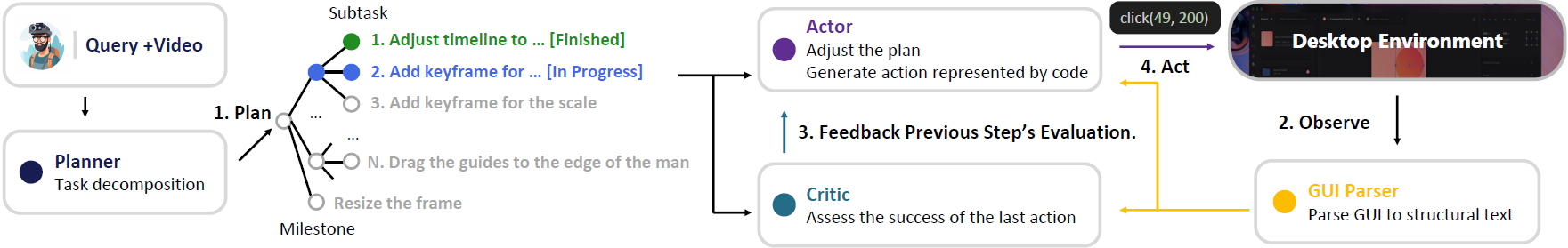

AssistGUI iteratively employs a Planner, a GUI Parser, a Critic module for action assessment, and an Actor module for adjusting the plan and generating code for controlling the desktop, sequentially completing subtasks until the task is finished.
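The control flow in the caption can be sketched as a loop over subtasks. This is a simplified illustration, not the authors' implementation. The callables `plan`, `parse_gui`, `act`, `critique`, and `execute` are hypothetical stand-ins for the Planner, GUI Parser, Actor, Critic, and desktop executor. Here the Actor only revises its next action from the Critic's feedback rather than rewriting the whole plan. `max_steps_per_subtask` is an assumed retry budget.

```python
from typing import Callable, List, Tuple

Step = Tuple[str, str, bool]  # (subtask, generated action code, critic verdict)


def run_task(
    task: str,
    plan: Callable[[str], List[str]],                        # Planner: task -> subtasks
    parse_gui: Callable[[], str],                            # GUI Parser: screen -> text
    act: Callable[[str, str, str], str],                     # Actor: (subtask, gui, feedback) -> code
    critique: Callable[[str, str, str], Tuple[bool, str]],   # Critic: (subtask, code, gui) -> (done, feedback)
    execute: Callable[[str], None],                          # runs action code on the desktop
    max_steps_per_subtask: int = 5,                          # assumed retry budget
) -> Tuple[bool, List[Step]]:
    log: List[Step] = []
    for subtask in plan(task):
        feedback = ""
        for _ in range(max_steps_per_subtask):
            code = act(subtask, parse_gui(), feedback)
            execute(code)
            # The Critic inspects the GUI *after* the action to judge it.
            done, feedback = critique(subtask, code, parse_gui())
            log.append((subtask, code, done))
            if done:
                break  # move on to the next subtask
        else:
            return False, log  # retry budget exhausted on this subtask
    return True, log
```

Keeping each module behind a plain callable makes it easy to swap an LLM-backed component for a stub when testing the loop itself.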

@article{gao2023assistgui,
  title = {AssistGUI: Task-Oriented Desktop Graphical User Interface Automation},
  author = {Difei Gao and Lei Ji and Zechen Bai and Mingyu Ouyang and Peiran Li and Dongxing Mao and Qinchen Wu and Weichen Zhang and Peiyi Wang and Xiangwu Guo and Hengxu Wang and Luowei Zhou and Mike Zheng Shou},
  year = {2023},
}